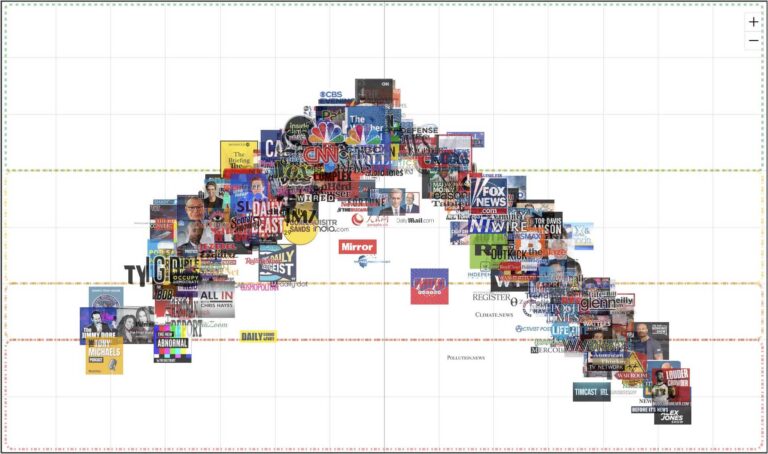

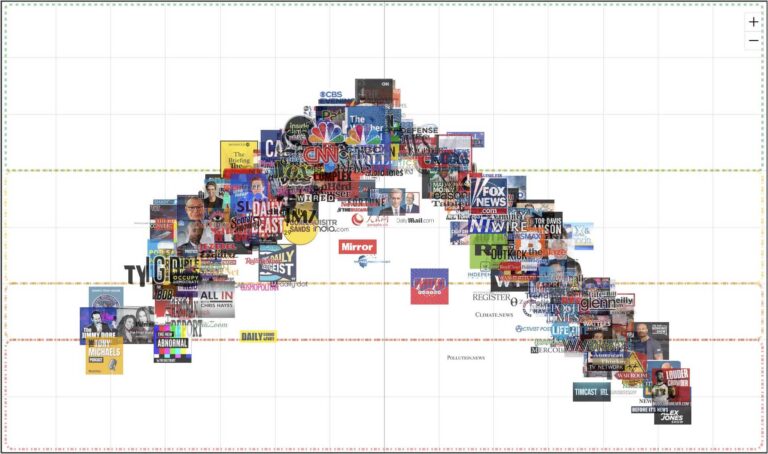

Interactive Media Bias Chart®

Use the search box to isolate displayed sources and see their individual articles or episodes.

The following description is a summary of our methodology. For more detail, please see our Methodology page.

Overall news source scores are generated based on scores of individual articles. Each article is rated by at least three human analysts with balanced political viewpoints: one who self-identifies as right-leaning, one as center-leaning, and one as left-leaning. Sometimes articles are rated by larger panels of analysts for various reasons.

For each news source, we pick a sample of articles that are most prominently featured on that source’s website over several news cycles. We typically have at least 15 articles rated per web source, but for larger sources (such as the New York Times and Washington Post) we have over 100 articles in our sample. We rate all types of articles, including those labeled analysis or opinion by the news source, and the dominant factor for how we select articles from a page is prominence.

Each overall source score is a weighted average of the individual article scores. Our weighting algorithm captures the effect that individual articles with low reliability or high bias have on overall perceptions of the news source.
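As a rough illustration of how a weighted average of article scores could be computed, here is a minimal Python sketch. The actual weighting algorithm is not described in this summary, so `illustrative_weight` is a purely hypothetical stand-in that gives more weight to articles with low reliability or high bias; the function names and the weight formula are assumptions, and only the 0 to 64 reliability scale and the -42 to +42 bias scale come from the methodology itself.

```python
RELIABILITY_MAX = 64  # from the methodology: 64 is the most reliable
BIAS_MAX = 42         # from the methodology: bias runs from -42 (left) to +42 (right)


def illustrative_weight(reliability, bias):
    """Hypothetical weight that grows as reliability falls or |bias| rises.

    This is NOT the published algorithm, only a placeholder for illustration.
    """
    reliability_penalty = (RELIABILITY_MAX - reliability) / RELIABILITY_MAX  # 0 to 1
    bias_penalty = abs(bias) / BIAS_MAX                                      # 0 to 1
    return 1.0 + reliability_penalty + bias_penalty


def source_score(articles, weight_fn=illustrative_weight):
    """Return the weighted average (reliability, bias) over article scores.

    `articles` is a list of (reliability, bias) tuples, one per rated article.
    """
    if not articles:
        raise ValueError("need at least one article score")
    weights = [weight_fn(r, b) for r, b in articles]
    total = sum(weights)
    reliability = sum(w * r for w, (r, _) in zip(weights, articles)) / total
    bias = sum(w * b for w, (_, b) in zip(weights, articles)) / total
    return reliability, bias


if __name__ == "__main__":
    sample = [(64, 0), (0, 42)]
    print(source_score(sample))                            # weighted
    print(source_score(sample, weight_fn=lambda r, b: 1))  # plain mean for comparison
```

With any weight function of this shape, a single low-reliability or highly biased article pulls the source score further than it would in a plain mean, which is the effect the weighting is said to capture.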

Each analyst has gone through extensive training on Ad Fontes Media’s rating methodology, which is based on content analysis of articles.

Each analyst rates each article on three reliability sub-factors: 1) Expression, 2) Veracity, and 3) Headline/Graphic. The analyst then gives the article an “Overall” reliability rating. Each of these ratings is on a numerical scale from 0 to 64, with 0 being the least reliable and 64 being the most reliable.

Each analyst also rates each article on three bias sub-factors: 1) Language, 2) Political Position, and 3) Comparison. The analyst then gives the article an “Overall” bias rating. Each of these ratings is on a numerical scale from -42 (left) to +42 (right).

The analysts’ scores are then averaged, and the average score is shown on the chart.
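The averaging step can be sketched in a few lines of Python. This is a minimal illustration, not Ad Fontes Media's implementation: the `AnalystRating` class and `article_score` function are hypothetical names, and the sketch assumes a plain arithmetic mean of each analyst's “Overall” ratings. The scale limits and the three-analyst minimum are taken from the description above.

```python
from dataclasses import dataclass
from statistics import mean

RELIABILITY_RANGE = (0, 64)  # 0 = least reliable, 64 = most reliable
BIAS_RANGE = (-42, 42)       # -42 = left, +42 = right


@dataclass(frozen=True)
class AnalystRating:
    """One analyst's "Overall" ratings for one article (hypothetical structure)."""
    reliability: float
    bias: float

    def __post_init__(self):
        # Reject values outside the scales stated in the methodology.
        if not RELIABILITY_RANGE[0] <= self.reliability <= RELIABILITY_RANGE[1]:
            raise ValueError(f"reliability out of range: {self.reliability}")
        if not BIAS_RANGE[0] <= self.bias <= BIAS_RANGE[1]:
            raise ValueError(f"bias out of range: {self.bias}")


def article_score(ratings):
    """Average the analysts' overall ratings into one (reliability, bias) pair."""
    if len(ratings) < 3:
        raise ValueError("each article is rated by at least three analysts")
    return (
        mean(r.reliability for r in ratings),
        mean(r.bias for r in ratings),
    )


if __name__ == "__main__":
    panel = [AnalystRating(40, -10), AnalystRating(44, -4), AnalystRating(48, 2)]
    print(article_score(panel))
```

The resulting pair is the kind of point that would be plotted for an article, with bias on one axis and reliability on the other.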

Comscore’s Total Digital Population Data (provided by Comscore Media Metrix®, representing an unduplicated measure of digital audiences across desktop and mobile platforms) is viewable as a proportionally sized bubble around each news/information source on the Media Bias Chart. Pro users who purchase the Comscore add-on can also view and download the numerical data.

We hope you enjoy exploring our data on the Media Bias Chart, and we’ll keep adding as much as we can to it as our company grows. If you want to help us grow and always have access to the most up-to-date downloads of the Media Bias Chart, please consider becoming a member!

The Interactive Media Bias Chart® (“IMBC”) is a data visualization displaying both bias and reliability ratings for news and “news-like” content. Source types include Web/Print, Podcast, and TV/Video Programs. Our politically balanced team of human analysts allows us to continually add new rated sources and update current ones.

Please note: Comscore audience size data is available only as an add-on for Pro editions of the chart.